Apache Spark is a free, open-source, general-purpose framework for clustered computing. It is specially designed for speed and is used in machine learning to stream processing to complex SQL queries. It is capable of analyzing large datasets across multiple computers and processes the data in parallel. Apache Spark provides APIs for multiple programming languages including Python, R, and Scala. It also supports higher-level tools including GraphX, Spark SQL, MLlib, and more.

In this post, we will show you how to install and configure Apache Spark on Debian 12.

Step 1 – Install Java

Before starting, you will need to install Java to run Apache Spark. You can install it using the following commands:

apt-get update -y

apt-get install default-jdk -y

After installing Java, verify the Java installation using the following command:

java --version

You should see the following output:

openjdk 17.0.16 2025-07-15 OpenJDK Runtime Environment (build 17.0.16+8-Debian-1deb12u1) OpenJDK 64-Bit Server VM (build 17.0.16+8-Debian-1deb12u1, mixed mode, sharing)

Step 2 – Install Apache Spark

First, you will need to download the latest version of Apache Spark from its official website. You can download it with the following command:

wget https://dlcdn.apache.org/spark/spark-4.0.0/spark-4.0.0-bin-hadoop3.tgz

Once the download is completed, extract the downloaded file with the following command:

tar xvf spark-4.0.0-bin-hadoop3.tgz

Next, move the extracted directory to /opt:

mv spark-4.0.0-bin-hadoop3 /opt/spark

Next, you will need to define an environment variable to run Spark.

You can define it inside the ~/.bashrc file:

nano ~/.bashrc

Add the following line:

export SPARK_HOME=/opt/spark export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

Save and close the file, then activate the environment variable with the following command:

source ~/.bashrc

Step 3 – Start Apache Spark Cluster

At this point, Apache spark is installed. You can now start the Apache Spark using the following command:

start-master.sh

You should get the following output:

starting org.apache.spark.deploy.master.Master, logging to /opt/spark/logs/spark-root-org.apache.spark.deploy.master.Master-1-debian10.out

By default, Apache Spark listens on port 8080. You can check it with the following command:

ss -tunelp | grep 8080

You should get the following output:

tcp LISTEN 0 1 *:8080 *:* users:(("java",pid=5931,fd=302)) ino:24026 sk:9 v6only:0 <->

Step 4 – Start Apache Spark Worker Process

Next, start the Apache Spark worker process with the following command:

start-worker.sh spark://debian:7077

You should get the following output:

starting org.apache.spark.deploy.worker.Worker, logging to /opt/spark/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-debian10.out

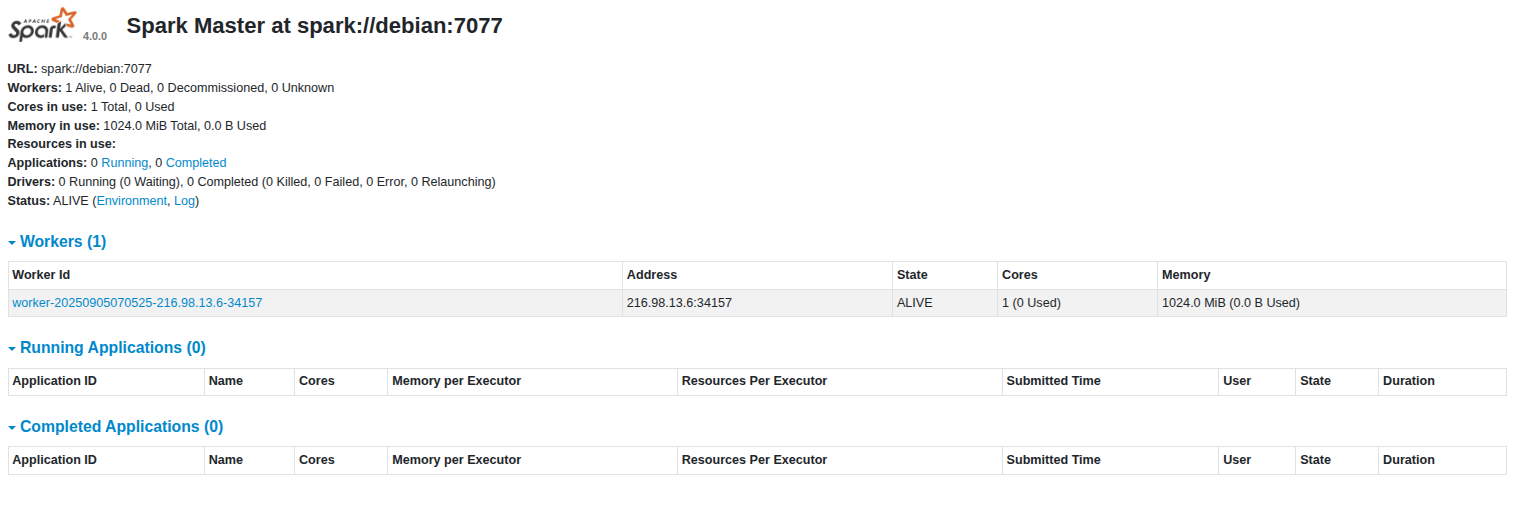

Step 5 – Access Apache Spark

You can now access the Apache Spark web interface using the URL http://your-server-ip:8080. You should see the Apache Spark dashboard on the following screen:

Step 6 – Access Apache Spark Shell

Apache Spark also provides a command-line interface to manage Apache Spark. You can access it using the following command:

spark-shell

Once you are connected, you should get the following shell:

Using Spark's default log4j profile: org/apache/spark/log4j2-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 4.0.0

/_/

Using Scala version 2.13.16 (OpenJDK 64-Bit Server VM, Java 17.0.16)

Type in expressions to have them evaluated.

Type :help for more information.

25/09/05 07:06:33 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Spark context Web UI available at http://debian:4040

Spark context available as 'sc' (master = local[*], app id = local-1757055995938).

Spark session available as 'spark'.

scala>

If you want to stop the Apache Spark cluster, run the following command:

stop-master.sh

To stop the Apache Spark worker, run the following command:

stop-worker.sh

Conclusion

Congratulations! You have successfully installed and configured Apache Spark on Debian 12. This guide will help you to perform basic tests before you start configuring a Spark cluster and performing advanced actions. Try it on your dedicated server today!

* This post is for informational purposes only and does not constitute professional, legal, financial, or technical advice. Each situation is unique and may require guidance from a qualified professional.

Readers should conduct their own due diligence before making any decisions.