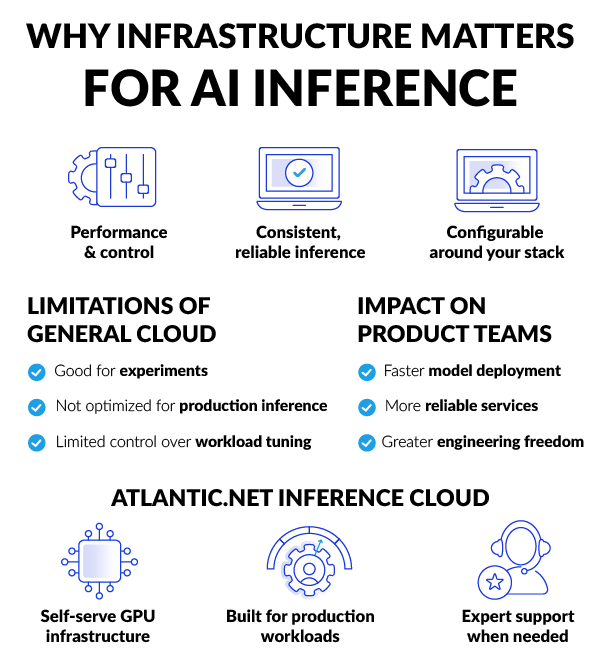

Self-serve infrastructure for customer-managed AI inference

Atlantic.Net Inference Cloud is a self-serve GPU cloud platform designed for teams that need dedicated GPU infrastructure for inference workloads.

It enables engineering teams to deploy and manage their own AI models without relying on a fully managed platform, giving more control over runtime, scaling, and deployment architecture.

Atlantic.Net provides the H100-powered infrastructure layer, while your team manages the model, runtime, and supporting services required for production environments.

You can start quickly with a self-serve setup, and scale into more advanced, custom deployment configurations as your infrastructure needs grow.