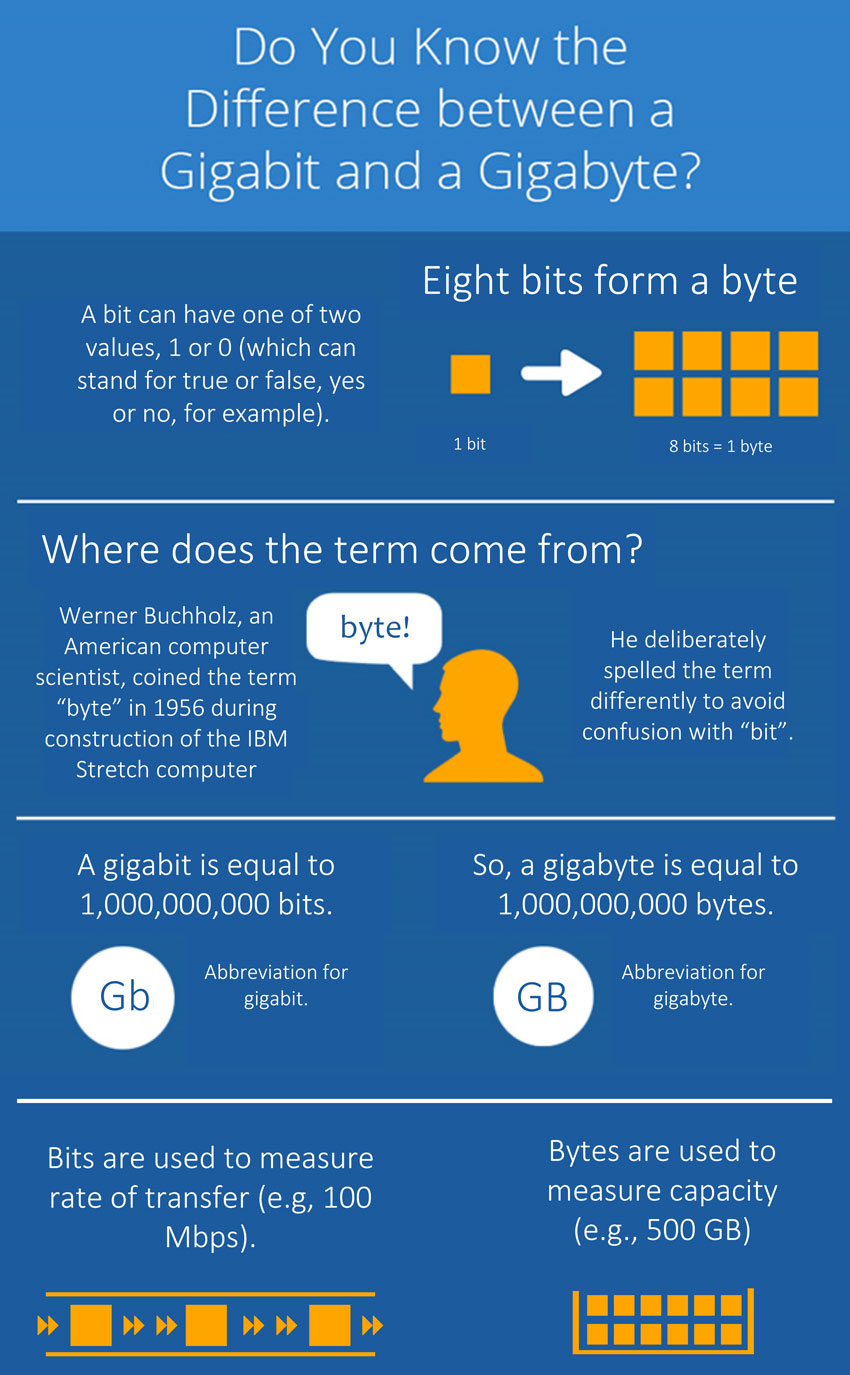

Many people confuse the terms “gigabit” and “gigabyte,” either using them interchangeably or mixing up their meanings. Both are units of digital data, but they differ in how much they measure and in how they are used. This article defines each term and explains the difference.

A Bit

A bit is the most basic unit used in computing and telecommunications. A bit is a binary unit, meaning it can have one of two values: a 1 or a 0. In computers, this value can indicate expressions such as “true” or “false,” “yes” or “no,” “come hither,” or “ain’t gonna happen.” (Just kidding with that last one!)

A Byte

A byte is 8 bits*. Werner Buchholz, a German-American computer scientist, coined the term “byte” in 1956 during the design of the IBM Stretch computer. He deliberately spelled the term differently to avoid confusion with the term “bit.”

The Difference

When we need to refer to numbers of bits or bytes as those numbers get larger and larger, we use the prefixes from the metric system (see table below for examples). To distinguish between the two when abbreviating them, the lower-case “b” traditionally represents “bit”, whereas the upper-case “B” represents “byte”.

| prefix | multiplier† | bits-to-bytes | bytes-to-bits |
|---|---|---|---|
| kilo- (K) | 1,000x | 1Kb = 125B | 1KB = 8Kb |
| mega- (M) | 1,000,000x | 1Mb = 125KB | 1MB = 8Mb |
| giga- (G) | 1,000,000,000x | 1Gb = 125MB | 1GB = 8Gb |
| tera- (T) | 1,000,000,000,000x | 1Tb = 125GB | 1TB = 8Tb |
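The conversions in the table all come from the same rule: divide bits by 8 to get bytes, and multiply bytes by 8 to get bits. The short Python sketch below illustrates this using decimal prefixes. The function names `bits_to_bytes` and `bytes_to_bits` are made up for this example.

```python
BITS_PER_BYTE = 8  # a byte is 8 bits


def bits_to_bytes(bits: float) -> float:
    """Convert a number of bits to the equivalent number of bytes."""
    return bits / BITS_PER_BYTE


def bytes_to_bits(num_bytes: float) -> float:
    """Convert a number of bytes to the equivalent number of bits."""
    return num_bytes * BITS_PER_BYTE


# 1 gigabit (1,000,000,000 bits), expressed in megabytes
one_gigabit = 1_000_000_000
print(bits_to_bytes(one_gigabit) / 1_000_000)  # 125.0

# 1 gigabyte (1,000,000,000 bytes), expressed in gigabits
one_gigabyte = 1_000_000_000
print(bytes_to_bits(one_gigabyte) / 1_000_000_000)  # 8.0
```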

They are also different in how they are used as units of measurement. Bits are generally used when measuring the rate of data transfer. When we talk about network throughput (or what is often called “bandwidth”) or internal data transfer (such as in describing SATA or USB speeds), we use megabits per second or gigabits per second.

Bytes are generally used when describing data capacity. We measure the sizes of our files and the hard drives that store them in gigabytes and terabytes (and, perhaps soon, petabytes!).
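Because file sizes are given in bytes and connection speeds in bits per second, estimating a transfer time means converting one to the other. Here is a rough sketch of that arithmetic. The function name `transfer_seconds` is hypothetical, and the result is a best-case figure that assumes decimal prefixes and ignores protocol overhead and real-world slowdowns.

```python
def transfer_seconds(file_size_bytes: float, link_bits_per_second: float) -> float:
    """Best-case time to move a file over a link, ignoring all overhead."""
    file_size_bits = file_size_bytes * 8  # 8 bits per byte
    return file_size_bits / link_bits_per_second


one_gigabyte = 1_000_000_000                 # 1 GB file
one_gigabit_per_second = 1_000_000_000       # 1 Gbps link
hundred_megabits_per_second = 100_000_000    # 100 Mbps link

print(transfer_seconds(one_gigabyte, one_gigabit_per_second))       # 8.0
print(transfer_seconds(one_gigabyte, hundred_megabits_per_second))  # 80.0
```

In other words, a 1 GB file does not move across a 1 Gbps connection in one second. Even in the ideal case it takes eight.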

At Atlantic.Net, you do not have to worry about keeping up with confusing terminology because our expert technicians will handle all of the work for you! To learn more about our cloud hosting services, contact our team of hosting professionals today by calling 1-800-521-5881. Right now, we are offering dedicated server hosting plans from just $64 a month with no contractual commitments.

* Fun Fact: 4 bits, or half a byte, is called a nibble. It’s rarely used, but it’s official!

† Kind of. In actual usage, particularly in measurements of RAM and in the disk sizes reported by some operating systems, the metric prefixes are often binary-based rather than decimal-based. This table shows the differences between these two types of calculations.

| prefix | decimal | multiplier | binary | multiplier |
|---|---|---|---|---|
| kilo- (K) | 10^3 | 1,000x | 2^10 | 1,024x |
| mega- (M) | 10^6 | 1,000,000x | 2^20 | 1,048,576x |
| giga- (G) | 10^9 | 1,000,000,000x | 2^30 | 1,073,741,824x |
| tera- (T) | 10^12 | 1,000,000,000,000x | 2^40 | 1,099,511,627,776x |

You can see how that numbering scheme can grow to be confusing!
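To see how much the two schemes diverge, the sketch below re-expresses a decimal quantity in its binary counterpart. The dictionaries and the function name `decimal_to_binary_units` are made up for this example, and the multipliers are the ones from the table above.

```python
# Multipliers from the table: decimal (powers of 10) vs. binary (powers of 2)
DECIMAL = {"kilo": 10**3, "mega": 10**6, "giga": 10**9, "tera": 10**12}
BINARY = {"kilo": 2**10, "mega": 2**20, "giga": 2**30, "tera": 2**40}


def decimal_to_binary_units(value: float, prefix: str) -> float:
    """Re-express a decimal-prefixed quantity using the binary multiplier."""
    return value * DECIMAL[prefix] / BINARY[prefix]


# A "1 tera" quantity counted in decimal, measured in binary tera units
print(round(decimal_to_binary_units(1, "tera"), 3))  # 0.909

# The same quantity measured in binary giga units
print(round(1 * DECIMAL["tera"] / BINARY["giga"], 1))  # 931.3
```

The gap widens with each prefix: about 2.3% at kilo, but roughly 9% at tera. That is one reason a drive's advertised capacity can look larger than the figure a computer reports.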

* This post is for informational purposes only and does not constitute professional, legal, financial, or technical advice. Each situation is unique and may require guidance from a qualified professional.

Readers should conduct their own due diligence before making any decisions.